Alibaba’s New Qwen 3.5 Small AI Model Can Run Directly On an iPhone 17

Alibaba has just taken a significant step forward with a line of small AI models compact enough to run on a modern smartphone. Qwen 3.5’s smaller cousins pack the kind of punch that used to require a full server setup, and the smallest of them can run directly on an iPhone 17.

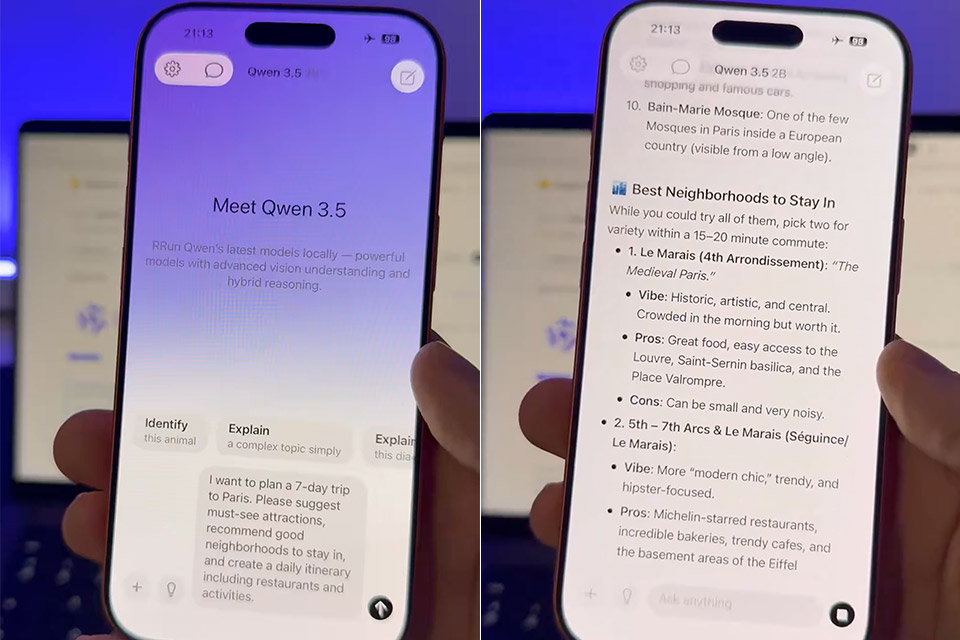

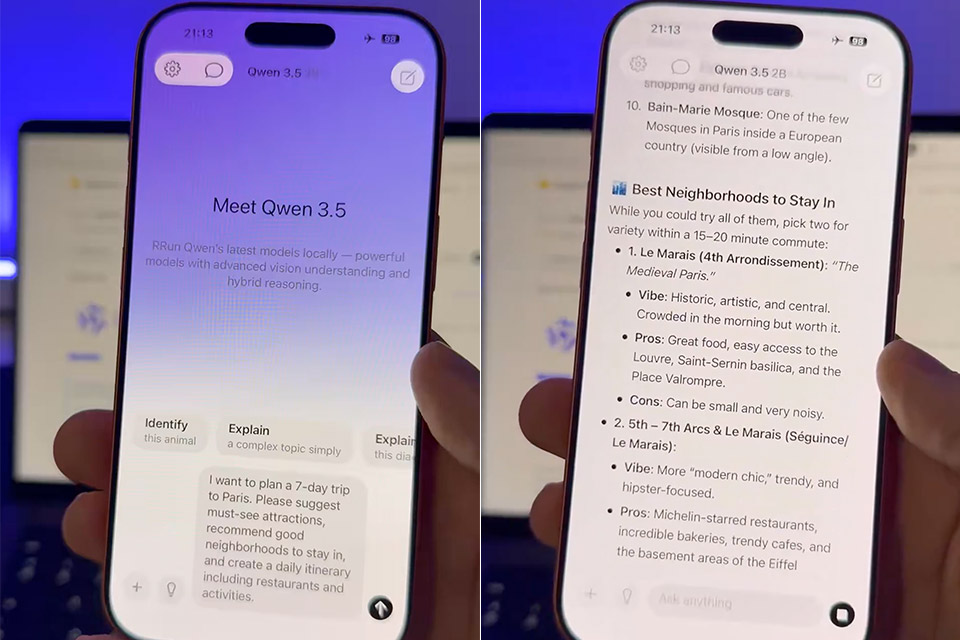

The new Qwen 3.5 by @Alibaba_Qwen running on-device on iPhone 17 Pro.

Qwen 3.5 beats models 4 times its size, has strong visual understanding, and can toggle reasoning on or off.

The 2B 6-bit model here is running with MLX optimized for Apple Silicon. pic.twitter.com/GsGGzur0og

— Adrien Grondin (@adrgrondin) March 2, 2026

Alibaba’s Qwen team introduced four new models today, with 0.8 billion, 2 billion, 4 billion, and 9 billion parameters. Built on the same upgraded architecture as the larger Qwen 3.5 models that launched in February 2026, these smaller versions are designed for efficiency and natively handle both text and images. The 0.8B and 2B versions are aimed at phones, laptops, and edge hardware where memory and battery life are critical. The 4B is designed for lightweight tasks, while the 9B approaches the reasoning, math, multilingual knowledge, and document-analysis capabilities of larger models.


The results are pretty striking, at least by Alibaba’s account. The company claims the 9B variant performs almost on par with systems of 120 billion parameters, which would put it level with (and in some cases ahead of) big hitters like ChatGPT and Gemini on a series of key tests. The 4B variant, meanwhile, reportedly performs at a level comparable to earlier 80B models. The smaller models trade some depth for speed and resource efficiency, but they can still handle basic image recognition and text tasks.

Community testing has shown that these models can run on mobile devices using tools such as MLX, with some developers even fitting a quantized version of the 2B model onto an iPhone 17. That means fast responses without needing an internet connection, paying subscription fees, or sending data to remote servers. The 0.8B and 2B variants work offline on ordinary phones, and users say the 4B model comes close to the larger models in real-world use. Plus, with open-source weights available on Hugging Face and ModelScope, deployment using familiar frameworks is fairly straightforward.
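To put the "fits on a phone" claim into numbers, a back-of-the-envelope rule helps: the memory needed just to hold a model's weights is roughly parameters × bits per weight ÷ 8. The Python sketch below applies that rule to the four announced sizes at 16-bit and at two common quantization levels (6-bit, as in the 2B demo in the tweet above, and 4-bit). It is a rough approximation under stated assumptions: it treats the advertised sizes as exact parameter counts and ignores the KV cache, activations, quantization metadata, and runtime overhead, so real memory use on a device will be higher.

```python
# Rough weight-memory estimate for the four small Qwen 3.5 sizes.
# Assumptions: the advertised sizes are exact parameter counts, and
# memory ~= parameters * bits_per_weight / 8 bytes (decimal GB).
# Ignores KV cache, activations, quantization scales/biases, and runtime overhead.

SIZES_BILLIONS = {"0.8B": 0.8, "2B": 2.0, "4B": 4.0, "9B": 9.0}


def weight_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes).

    (params_billions * 1e9 weights) * (bits / 8 bytes each) / 1e9 bytes per GB
    simplifies to params_billions * bits / 8.
    """
    return params_billions * bits_per_weight / 8


if __name__ == "__main__":
    # Compare unquantized 16-bit weights with 6-bit and 4-bit quantization.
    for name, params in SIZES_BILLIONS.items():
        row = ", ".join(
            f"{bits}-bit: {weight_gb(params, bits):.2f} GB" for bits in (16, 6, 4)
        )
        print(f"{name}: {row}")
```

By this estimate, the 2B model quantized to 6 bits needs about 1.5 GB for its weights, compared with about 4 GB at 16-bit, which helps explain why a quantized 2B build is the one shown running on an iPhone.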
